1. Consumer and stakeholder harm

Can the system generate misleading, discriminatory, unsafe, or unauthorized content that affects customers, employees, counterparties, or regulated outcomes?

For a Chief Compliance Officer, the goal is not to trust an LLM blindly. The goal is to build a controlled system around the model that can withstand supervisory scrutiny. Guardrails should reduce bias, resist prompt manipulation, protect sensitive data, improve transparency, and preserve human accountability through auditable controls.

A regulator-focused design begins with enterprise accountability. The right question is whether management can explain where the model is used, what it is allowed to do, what it is forbidden to do, and how exceptions are discovered and escalated.

Can the system generate misleading, discriminatory, unsafe, or unauthorized content that affects customers, employees, counterparties, or regulated outcomes?

Can sensitive or confidential data enter the model without authorization, or be exposed in outputs, logs, prompts, retrieval pipelines, or downstream tools?

Can management produce evidence showing what happened, why it happened, which controls fired, who reviewed exceptions, and how the issue was remediated?

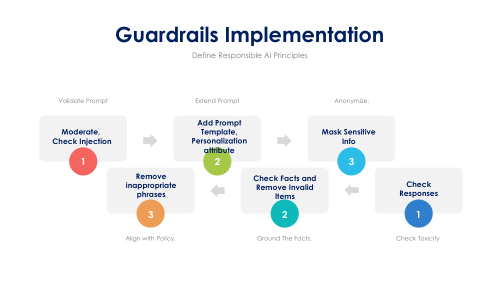

Effective guardrails are not a single moderation API. They are a coordinated set of preventive, detective, and responsive controls spanning policy, data, prompts, model orchestration, outputs, human review, and audit logging.

Maintain an inventory of LLM use cases, classify them by inherent risk, document approved purposes, and prohibit unapproved activities such as autonomous adverse action, legal interpretation, or unrestricted external communications.

Classify data before model access. Enforce least privilege, role-based access, masking, tokenization, retention controls, and retrieval boundaries so the model only sees what it is permitted to see.

Validate prompts for malicious intent, prompt injection, prohibited topics, geography restrictions, identity mismatches, and sensitive data submissions. High-risk inputs should be blocked or routed to a stricter workflow.

Constrain the model through hardened system instructions, scoped tools, trusted source retrieval, action allowlists, and separation between information generation and regulated decision execution.

Screen outputs for bias, privacy leakage, harmful content, unsupported claims, non-compliant language, and policy breaches. Responses in regulated workflows should cite approved sources or fall back safely.

Keep a human in the loop for edge cases, exceptions, adverse outcomes, investigations, and customer-impacting decisions. Reviewers should receive the model output, rationale, triggered policies, and recommended action path.

Capture prompts, retrieval context, output classifications, policy hits, overrides, reviewer actions, incident records, and model versioning so the enterprise can support audit, examinations, and remediation.

Guardrails must evolve as models, threats, and regulation change. Retest after prompt revisions, policy updates, model swaps, tool additions, or significant production drift.

The uploaded context highlights the core risk categories that matter most: bias, prompt manipulation, data security, privacy, transparency, and explainability. A compliance architecture should translate those concerns into operating controls that can be tested, evidenced, and escalated.

A regulator will be skeptical of a design that relies on one model provider policy or one output filter. The stronger approach is layered defense: pre-processing, runtime checks, post-processing, human review, and documented governance.

A regulator-focused program needs evidence that can be reviewed quickly. The compliance team should be able to produce artifacts showing policy intent, technical control design, real-world performance, exception handling, and remediation history.

Guardrails are strongest when enterprise functions share accountability. Compliance defines the control intent, security hardens the environment, product teams implement runtime logic, and legal reviews obligations and escalation pathways.

These questions help test whether the LLM program is merely innovative or genuinely controlled. If leadership cannot answer them clearly, the guardrail model is not yet mature enough for serious regulated use.

A regulator-focused LLM strategy is built around accountability, bounded behavior, continuous testing, and evidence. If the enterprise can explain the rules, prove the controls, trace the exceptions, and improve the system after failure, it is far better positioned to use LLMs responsibly at scale.