How to Use LLMs and Build LLM Applications

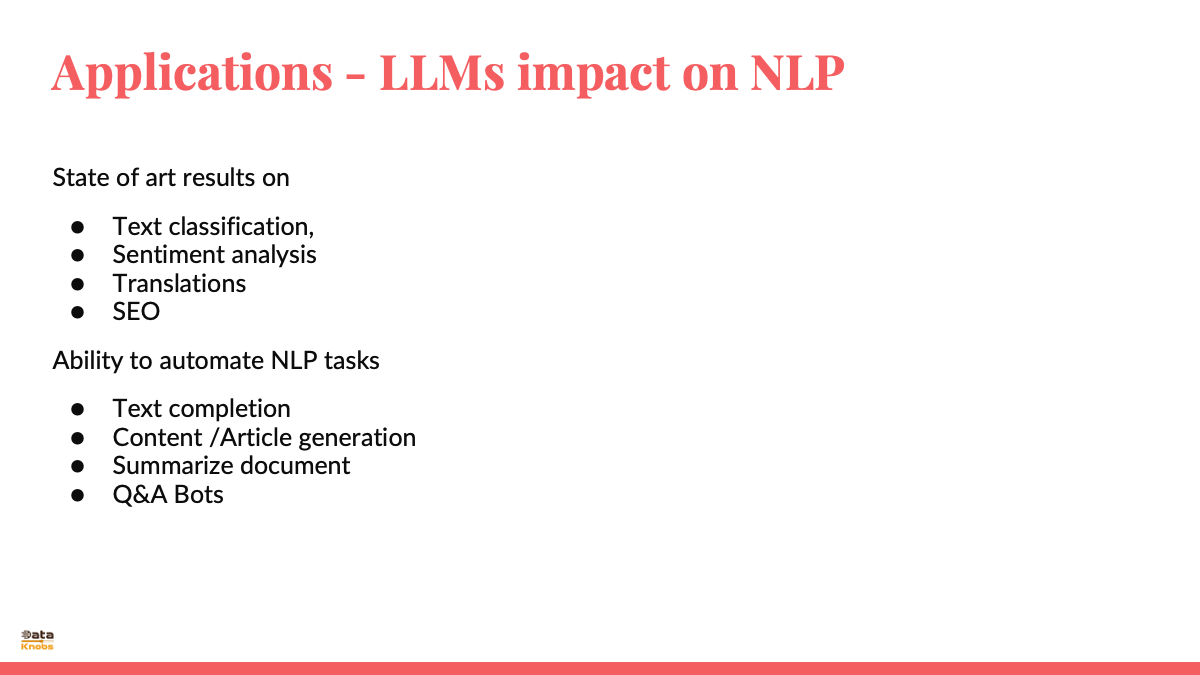

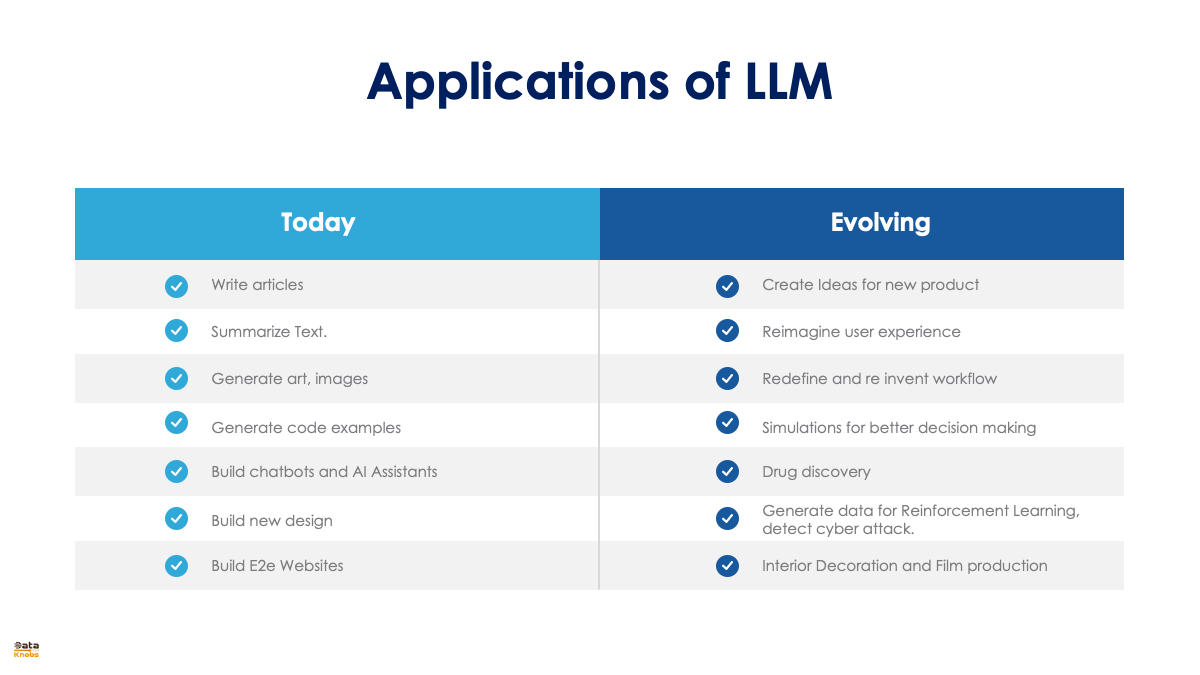

Large Language Models (LLMs) such as GPT-3 and BERT have reshaped natural language processing and support a wide range of applications, from text generation to classification. Here’s a guide on how to use LLMs and build applications with them:

1. Understand the Basics

Key Concepts:

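- Tokens: The smallest units of text the model processes, typically words, pieces of words, or single characters.

- Parameters: The weights and biases that the model learns during training.

- Pre-training and Fine-tuning: Pre-training teaches the model general language patterns from a large corpus; fine-tuning then adapts it to a specific task using a smaller, task-specific dataset.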
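To make the idea of tokens concrete, here is a minimal sketch of greedy longest-match subword splitting, in the style of the WordPiece scheme that BERT uses. The tiny vocabulary and the function names are hypothetical and chosen only for illustration; real tokenizers learn vocabularies with tens of thousands of entries.

```python
# Toy vocabulary (hypothetical). "##" marks a piece that continues a word.
VOCAB = {"token", "##ization", "##ize", "is", "fun", "un", "##related"}

def tokenize_word(word, vocab=VOCAB, unk="[UNK]"):
    """Split one word into subword tokens by greedy longest-match."""
    tokens, start = [], 0
    while start < len(word):
        end = len(word)
        piece = None
        # Try the longest remaining substring first, then shrink it.
        while end > start:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # word-internal piece
            if candidate in vocab:
                piece = candidate
                break
            end -= 1
        if piece is None:
            return [unk]  # nothing in the vocabulary matches here
        tokens.append(piece)
        start = end
    return tokens

def tokenize(text):
    """Lowercase, split on whitespace, then split each word into subwords."""
    return [tok for word in text.lower().split() for tok in tokenize_word(word)]

print(tokenize("Tokenization is fun"))
# → ['token', '##ization', 'is', 'fun']
```

A word that the vocabulary cannot cover, such as "xyz" here, comes back as the single unknown token.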

2. Select an LLM

Open Source Options:

- GPT-Neo/GPT-J: Available on platforms like Hugging Face.

- BERT/RoBERTa: Available on the Hugging Face Model Hub.

- T5: Also available on Hugging Face.

Closed Source Options:

- GPT-3: Accessible via OpenAI's API.

- Claude: Accessible via Anthropic’s API.

- DeepMind’s Gopher: Available through specific collaborations.

3. Set Up the Environment

Install Required Libraries:

```bash
pip install transformers torch datasets openai flask
```

Obtain API Keys:

For closed-source models like GPT-3, you'll need an API key from the provider (e.g., OpenAI).
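Rather than pasting a key into source code, a common practice is to read it from an environment variable. The sketch below assumes the key is stored in `OPENAI_API_KEY` (the variable the OpenAI Python library looks for by default); the helper name `load_api_key` is hypothetical.

```python
import os

def load_api_key(var_name="OPENAI_API_KEY"):
    """Return the API key stored in an environment variable, or raise a clear error."""
    key = os.environ.get(var_name, "").strip()
    if not key:
        raise RuntimeError(f"Set the {var_name} environment variable before running.")
    return key
```

After setting the variable in your shell (for example `export OPENAI_API_KEY=...`), pass the value returned by `load_api_key()` wherever the examples in this guide use `'YOUR_API_KEY'`.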

4. Using LLMs for Basic Tasks

Example: Text Generation with GPT-3

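```python
from openai import OpenAI

# Requires openai>=1.0; the older openai.Completion interface was removed in that release.
client = OpenAI(api_key='YOUR_API_KEY')

# text-davinci-003 has been retired; gpt-3.5-turbo-instruct is its
# replacement on the completions endpoint.
response = client.completions.create(
    model="gpt-3.5-turbo-instruct",
    prompt="Write a short story about a robot learning to cook.",
    max_tokens=150
)

# The generated text is in the first choice.
print(response.choices[0].text.strip())
```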

Example: Using Hugging Face Transformers for Text Classification with BERT

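```python
from transformers import pipeline

# With no model specified, the pipeline downloads a default sentiment model
# (a DistilBERT checkpoint fine-tuned on SST-2 at the time of writing).
classifier = pipeline('sentiment-analysis')

result = classifier('I love using large language models!')

# Prints a list with one dict holding a 'label' and a 'score'.
print(result)
```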

5. Fine-Tuning an LLM

Fine-tuning involves training a pre-trained model on a specific dataset to specialize it for a particular task.

Example: Fine-Tuning BERT for Text Classification

- Prepare Dataset: Ensure your dataset is in a suitable format (e.g., CSV with text and label columns).

- Set Up Training Script:

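```python
from transformers import (
    BertForSequenceClassification,
    BertTokenizerFast,
    Trainer,
    TrainingArguments,
)
from datasets import load_dataset

# Expects train.csv and test.csv with 'text' and 'label' columns (integer labels).
dataset = load_dataset('csv', data_files={'train': 'train.csv', 'test': 'test.csv'})

# The model needs token IDs, not raw strings, so tokenize the text column first.
tokenizer = BertTokenizerFast.from_pretrained('bert-base-uncased')

def tokenize(batch):
    # max_length=128 is an illustrative choice; adjust it to your data.
    return tokenizer(batch['text'], truncation=True, padding='max_length', max_length=128)

dataset = dataset.map(tokenize, batched=True)

# num_labels defaults to 2; set it to match the number of classes in your data.
model = BertForSequenceClassification.from_pretrained('bert-base-uncased', num_labels=2)

training_args = TrainingArguments(
    output_dir='./results',
    num_train_epochs=3,
    per_device_train_batch_size=16,
    per_device_eval_batch_size=64,
    warmup_steps=500,
    weight_decay=0.01,
    logging_dir='./logs',
    logging_steps=10,
)

trainer = Trainer(
    model=model,
    args=training_args,
    train_dataset=dataset['train'],
    eval_dataset=dataset['test']
)

trainer.train()
```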
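The training script above passes an evaluation dataset but does not define how to score the model on it. One way to do that, sketched here under the assumption that accuracy is the metric you care about, is a small function that turns logits into predicted classes and compares them with the labels. The function name `compute_metrics` matches the `Trainer` argument of the same name; the sample logits are made up.

```python
def compute_metrics(eval_pred):
    """Return accuracy given a (logits, labels) pair from evaluation."""
    logits, labels = eval_pred
    # The predicted class is the index of the largest logit in each row.
    predictions = [max(range(len(row)), key=lambda i: row[i]) for row in logits]
    correct = sum(int(p == y) for p, y in zip(predictions, labels))
    return {"accuracy": correct / len(labels)}

# Small check with hand-written logits for three examples and two classes.
print(compute_metrics(([[0.1, 0.9], [2.0, -1.0], [0.3, 0.4]], [1, 0, 0])))
```

To use it, pass `compute_metrics=compute_metrics` when constructing the `Trainer` and call `trainer.evaluate()` after training.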

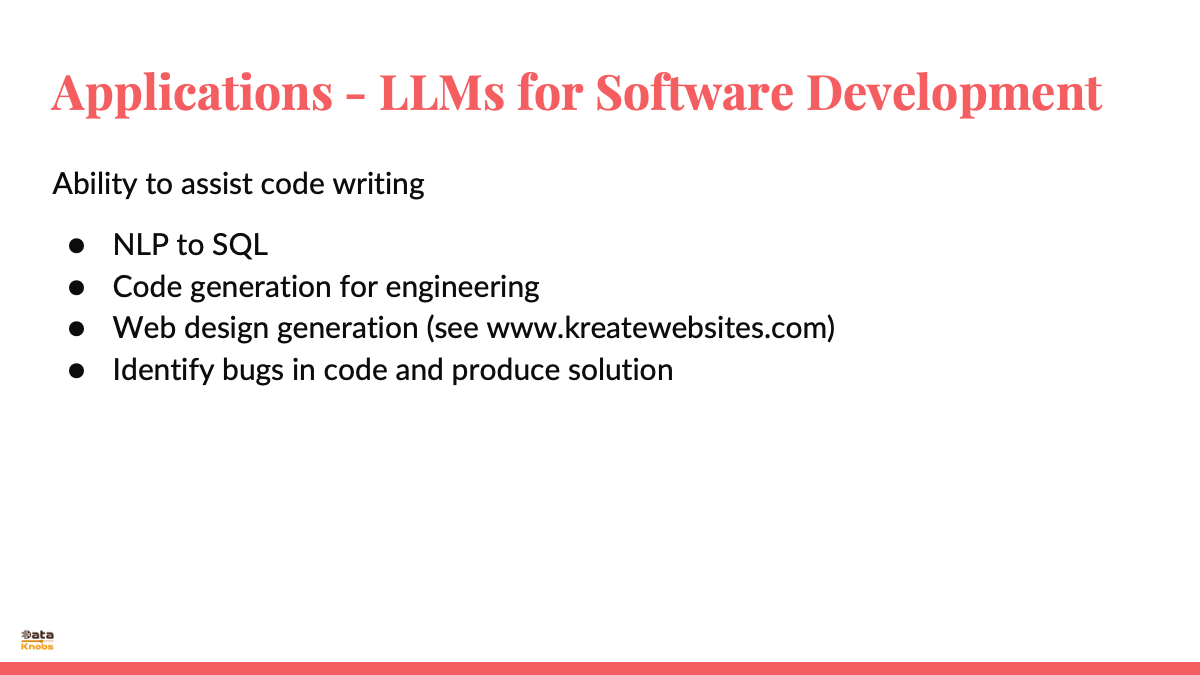

6. Building Applications with LLMs

Chatbot

- Integrate with a Web Framework: Use Flask or Django to create a web interface.

- Create Backend Logic: Use the LLM to process user inputs and generate responses.

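```python
from flask import Flask, request, jsonify
from openai import OpenAI

app = Flask(__name__)

# Requires openai>=1.0.
client = OpenAI(api_key='YOUR_API_KEY')

@app.route('/chat', methods=['POST'])
def chat():
    # Expects a JSON body such as {"input": "Hello"}.
    user_input = request.json['input']
    # gpt-3.5-turbo-instruct replaces the retired text-davinci-003.
    response = client.completions.create(
        model="gpt-3.5-turbo-instruct",
        prompt=user_input,
        max_tokens=150
    )
    return jsonify({'response': response.choices[0].text.strip()})

if __name__ == '__main__':
    # debug=True is for local development only.
    app.run(debug=True)
```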
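The endpoint above forwards only the latest message, so the model has no memory of earlier turns. A common approach is to prepend recent turns to the prompt. The sketch below is one way to do that; the `User:` and `Assistant:` labels, the function name, and the `max_turns` default are assumptions made for illustration.

```python
def build_prompt(history, user_input, max_turns=3):
    """Build a completion prompt from recent turns plus the new user message.

    history is a list of (user_text, assistant_text) tuples, oldest first.
    """
    # Keep only the most recent turns so the prompt stays within the token limit.
    recent = history[-max_turns:] if max_turns > 0 else []
    lines = []
    for user_text, assistant_text in recent:
        lines.append(f"User: {user_text}")
        lines.append(f"Assistant: {assistant_text}")
    lines.append(f"User: {user_input}")
    lines.append("Assistant:")  # cue the model to answer as the assistant
    return "\n".join(lines)

history = [("Hi", "Hello! How can I help?"), ("What is an LLM?", "A large language model.")]
print(build_prompt(history, "Give me an example."))
```

The returned string can be passed as the `prompt` in place of the raw user input, with the history stored per user session.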

Content Generation Tool

- Set Up the User Interface: Create a web interface where users can input prompts.

- Generate Content: Use the LLM to generate articles, stories, or other content types.
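For a content tool, the user's choices usually need to be turned into a prompt before they reach the model. The following is a minimal sketch using the standard library's `string.Template`; the template wording, the content types, and the parameter names are all hypothetical.

```python
from string import Template

# Hypothetical templates keyed by content type; adjust the wording to your use case.
TEMPLATES = {
    "article": Template("Write a $length-word article about $topic in a $tone tone."),
    "story": Template("Write a $length-word short story about $topic in a $tone tone."),
}

def build_content_prompt(content_type, topic, length=300, tone="neutral"):
    """Fill the template for content_type with the user's choices."""
    if content_type not in TEMPLATES:
        raise ValueError(f"Unsupported content type: {content_type}")
    return TEMPLATES[content_type].substitute(topic=topic, length=length, tone=tone)

print(build_content_prompt("story", "a robot learning to cook", length=150))
# → Write a 150-word short story about a robot learning to cook in a neutral tone.
```

Keeping the templates in one place makes it easier to add new content types without touching the request-handling code.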

7. Deploying the Application

- Containerization: Use Docker to containerize your application.

- Cloud Deployment: Deploy on cloud platforms like AWS, GCP, or Azure.

- Continuous Integration/Continuous Deployment (CI/CD): Implement CI/CD pipelines to streamline updates and maintenance.
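A CI pipeline generally should not call a paid API on every commit. One way to keep the application testable, sketched here with hypothetical names, is to pass the completion function in as an argument so that tests can substitute a stand-in.

```python
def generate_reply(user_input, complete):
    """Validate the input, call the completion function, and tidy the result.

    complete is any callable that maps a prompt string to generated text.
    """
    prompt = user_input.strip()
    if not prompt:
        raise ValueError("input must not be empty")
    return complete(prompt).strip()

def fake_complete(prompt):
    """Stand-in for the real API call; returns a predictable string."""
    return f"  echo: {prompt}\n"

# Checks a CI job could run without network access or an API key.
assert generate_reply(" hello ", fake_complete) == "echo: hello"
try:
    generate_reply("   ", fake_complete)
except ValueError:
    print("empty input rejected")
```

In production, `complete` would wrap the real model call; in the pipeline, the fake keeps tests fast and deterministic.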

8. Monitoring and Maintenance

- Monitoring: Track latency, error rates, and usage (such as request and token counts) of your LLM application.

- Feedback Loop: Collect user feedback to improve the model's performance and relevance.

- Regular Updates: Keep the model updated with new data and retrain periodically to maintain accuracy.
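As a starting point for monitoring, each model call can be wrapped so that its latency and size are logged. This is a minimal sketch using only the standard library; the logger name, the decorator name `monitored`, and the placeholder `generate` function are assumptions, and a production setup would more likely send these numbers to a metrics system.

```python
import logging
import time
from functools import wraps

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("llm_app")

def monitored(func):
    """Log latency, prompt size, and failures for each call to func."""
    @wraps(func)
    def wrapper(prompt, *args, **kwargs):
        start = time.perf_counter()
        try:
            result = func(prompt, *args, **kwargs)
        except Exception:
            logger.exception("LLM call failed after %.3fs", time.perf_counter() - start)
            raise
        elapsed = time.perf_counter() - start
        # Character counts are a rough proxy; real token counts come from the provider.
        logger.info("LLM call ok: %.3fs, prompt_chars=%d, output_chars=%d",
                    elapsed, len(prompt), len(result))
        return result
    return wrapper

@monitored
def generate(prompt):
    """Placeholder for the real model call."""
    return prompt.upper()

print(generate("hello"))
```

Applying the decorator to the function that calls the model leaves the rest of the application unchanged.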

Conclusion

Using and building applications with LLMs involves selecting the right model, setting up the environment, fine-tuning the model if necessary, and integrating it into a user-facing application. Whether using open-source or closed-source models, the process requires a solid understanding of machine learning principles and software development practices. By following these steps, you can leverage the power of LLMs to create innovative and useful applications.