LLM Course and tutorial

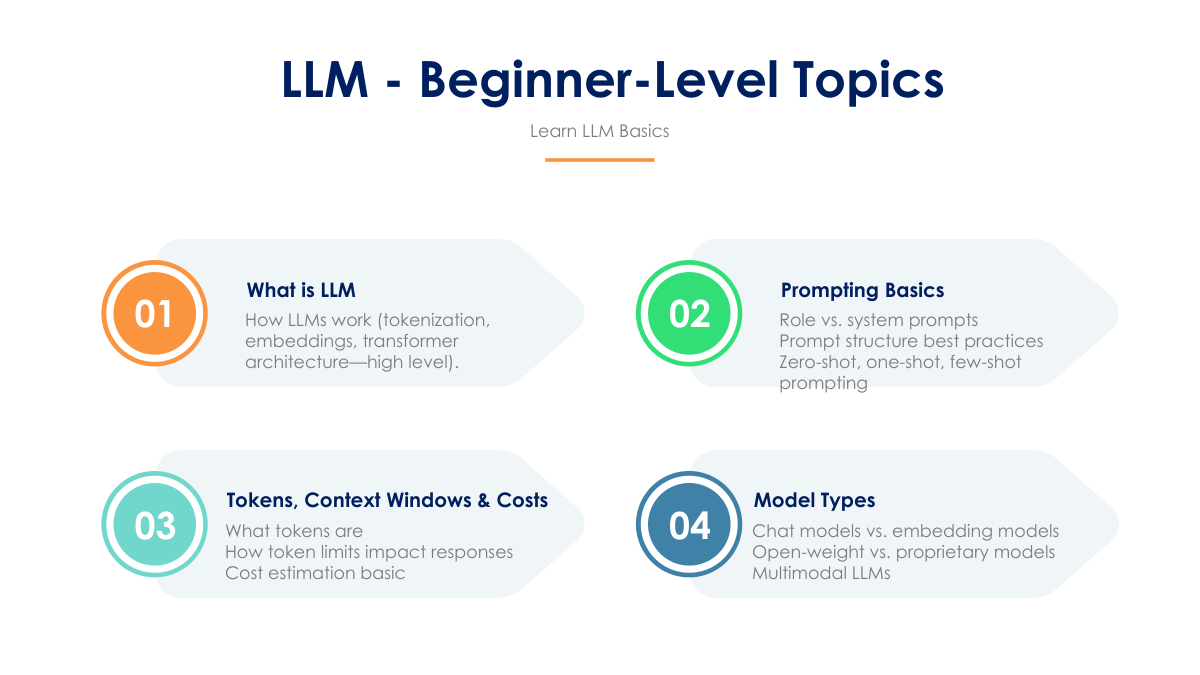

LLM TUTORIAL 1

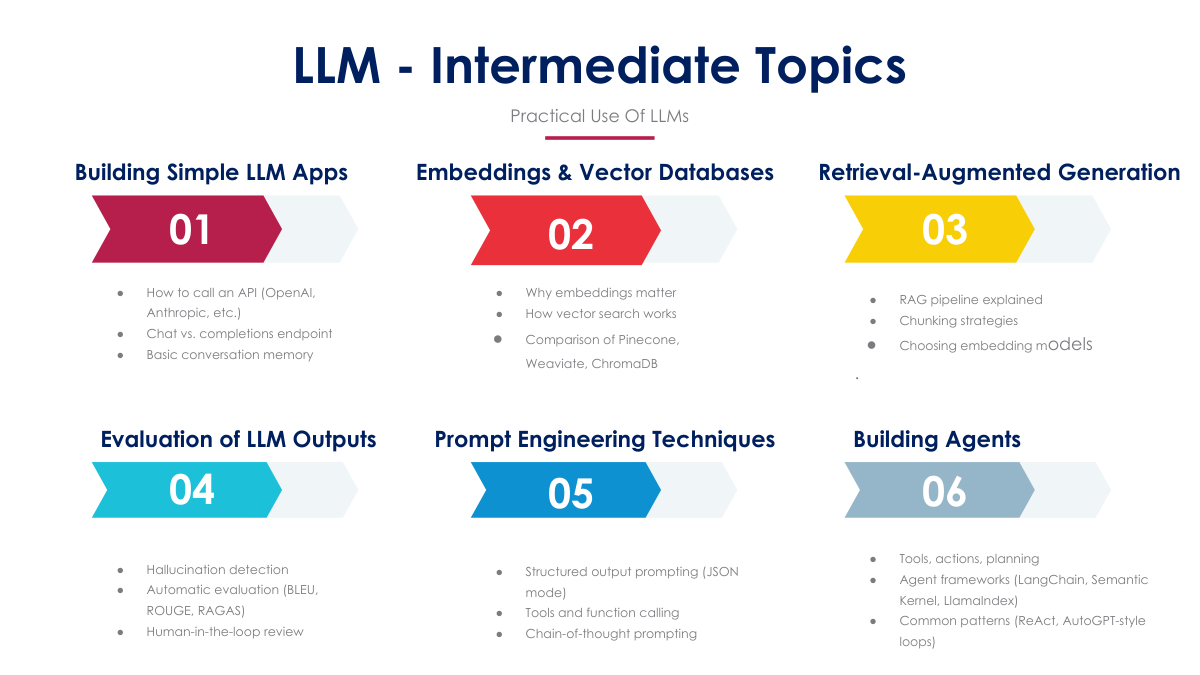

LLM TUTORIAL 2

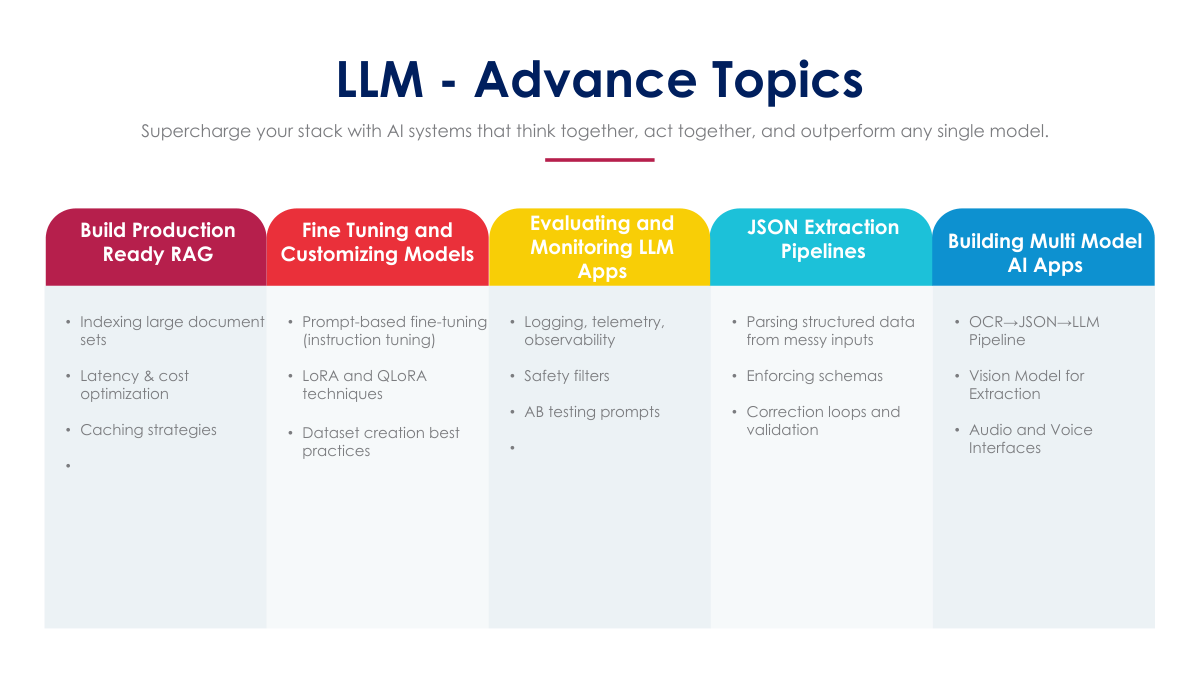

LLM TUTORIAL 3

LLM TUTORIAL 4

Large Language Models (LLMs)

LLMs are a type of artificial intelligence (AI) capable of processing and generating human-like text in response to a wide range of prompts and questions. Trained on massive datasets of text and code, they can perform various tasks such as:

Generating different creative text formats: poems, code, scripts, musical pieces, emails, letters, etc.

Answering open-ended, challenging, or unusual questions in an informative way: drawing on the knowledge encoded in their parameters during training.

Translating languages: converting text from one language to another.

Writing long-form creative content: stories and similar pieces that can be difficult to distinguish from human-written text.

Retrieval Augmented Generation (RAG)

RAG is an approach that combines the strengths of LLMs with external knowledge sources. It works in three steps:

Retrieval: Given a prompt, the retriever searches an external document store for passages relevant to the query.

Augmentation: The retrieved information is then used to enrich the context provided to the LLM. This can be done by incorporating facts, examples, or arguments into the prompt.

Generation: Finally, the LLM uses the enhanced context to generate a response that is grounded in factual information and tailored to the specific query.
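The three steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the document store is a hard-coded list, relevance is plain word overlap rather than embeddings or a search index, and `generate` is a hypothetical placeholder standing in for a real LLM call. All function names and sample documents are invented for the example.

```python
import re
from collections import Counter

# Toy document store. The contents are illustrative assumptions.
DOCUMENTS = [
    "RAG retrieves documents from an external knowledge source.",
    "Prompt engineering means crafting prompts that guide an LLM.",
    "LLMs are trained on large datasets of text and code.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query, document):
    """Count the word tokens shared by the query and the document."""
    shared = Counter(tokenize(query)) & Counter(tokenize(document))
    return sum(shared.values())


def retrieve(query, documents, k=2):
    """Retrieval: return the k documents with the highest overlap score."""
    ranked = sorted(documents, key=lambda doc: score(query, doc), reverse=True)
    return ranked[:k]


def augment(query, retrieved):
    """Augmentation: place the retrieved text in the prompt as context."""
    context = "\n".join(f"- {doc}" for doc in retrieved)
    return (
        "Use only the context below to answer.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


def generate(prompt):
    """Generation: hypothetical placeholder for a call to an LLM."""
    return f"[model response to a {len(prompt)}-character prompt]"


def rag_answer(query, documents=DOCUMENTS, k=2):
    """Run retrieval, augmentation, and generation in sequence."""
    retrieved = retrieve(query, documents, k)
    prompt = augment(query, retrieved)
    return generate(prompt), retrieved
```

Calling `rag_answer("What does RAG retrieve?")` returns the placeholder response together with the retrieved documents. Returning the sources alongside the answer is a design choice that makes it possible to show users where a response came from.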

RAG offers several advantages over using an LLM on its own:

Improved factual accuracy: By anchoring responses in real-world data, RAG reduces the risk of generating false or misleading information.

Greater adaptability: When the external knowledge sources are updated, RAG can draw on the new information without retraining the model.

Transparency: Because the retrieved documents are known, a response can be traced back to its sources, which supports trust and accountability.

However, RAG also has its challenges:

Data quality: The accuracy and relevance of RAG's outputs depend heavily on the quality of the external knowledge sources.

Retrieval efficiency: Finding the most relevant information from a large database can be computationally expensive.

Integration complexity: Combining two different systems (retrieval and generation) introduces additional complexity in terms of design and implementation.

Prompt Engineering

Prompt engineering is a crucial technique for guiding LLMs towards generating desired outputs. It involves crafting prompts that:

Clearly define the task: Specify what the LLM should do with the provided information.

Provide context: Give the LLM enough background knowledge to understand the prompt and generate an appropriate response.

Use appropriate language: Phrase the prompt in clear, unambiguous wording that matches what the LLM can do and the kind of text it was trained on.
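As a rough illustration of the first two points, a prompt can be assembled from separate task and context fields so that neither is forgotten. The sketch below is an assumption about how one might structure this: the function name `build_prompt`, its parameters, and the section labels are all hypothetical and not tied to any particular LLM API.

```python
def build_prompt(task, context, user_input):
    """Assemble a prompt from a task statement, optional context, and the input.

    Hypothetical helper for illustration: the section labels are an
    assumption, not a format required by any specific model.
    """
    if not task.strip():
        raise ValueError("task must not be empty")

    sections = [f"Task: {task.strip()}"]
    # Context is optional; skip the section when none is supplied.
    if context.strip():
        sections.append(f"Context: {context.strip()}")
    sections.append(f"Input: {user_input.strip()}")
    return "\n\n".join(sections)


prompt = build_prompt(
    task="Summarize the input in two sentences.",
    context="The audience is software engineers new to LLMs.",
    user_input="RAG combines retrieval with text generation.",
)
print(prompt)
```

Keeping the task explicit and mandatory means a prompt cannot be built without stating what the model should do, while the context field stays optional for cases where no background is needed.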
