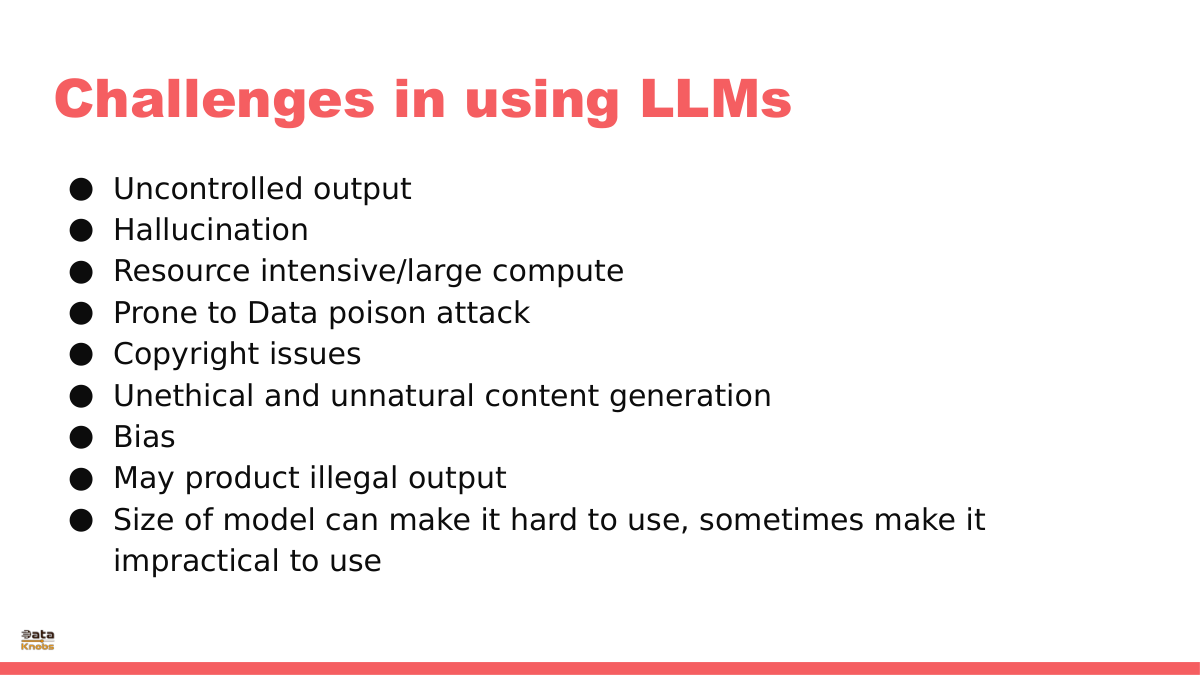

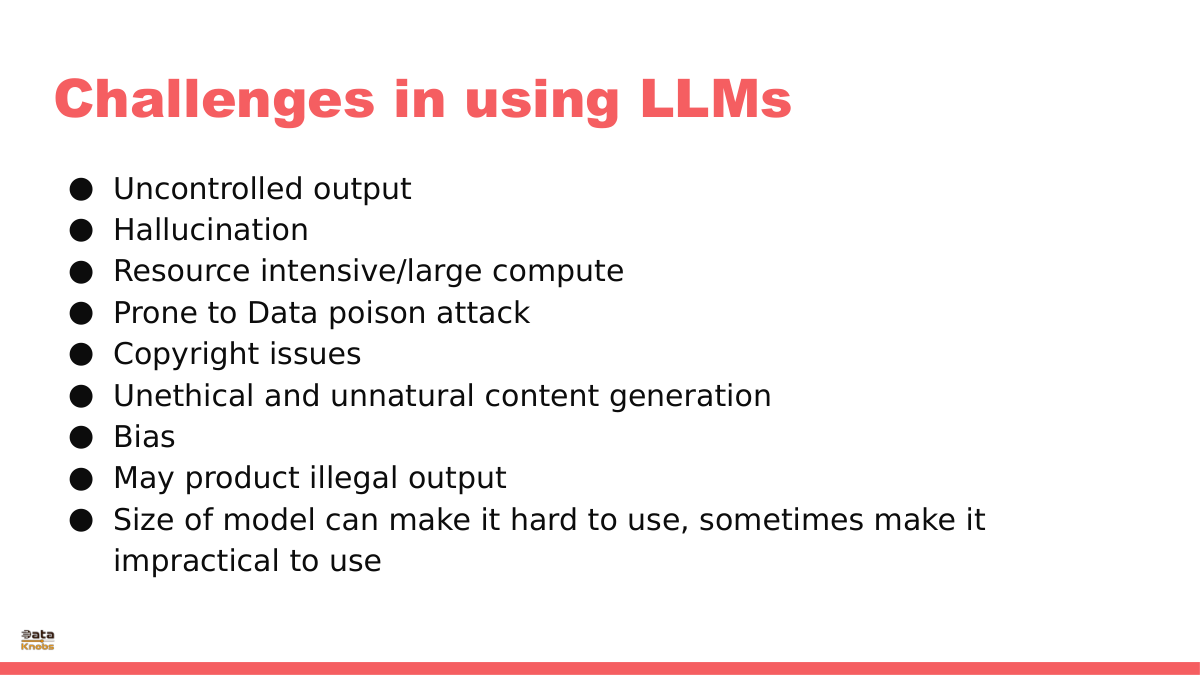

Challenges in Using LLM | Uncontrolled Data

Dataknobs delivers real, shipped outcomes across finance, healthcare, real estate, e‑commerce, and more—powered by GenAI, Agentic workflows, and classic ML. Explore detailed walk‑throughs of projects like Earnings Call Insights, E‑commerce Analytics with GenAI, Financial Planner AI, Kreatebots, Kreate Websites, Kreate CMS, Travel Agent Website, and Real Estate Agent tools.

Companies should build data products because they transform raw data into actionable, reusable assets that directly drive business outcomes. Instead of treating data as a byproduct of operations, a data product approach emphasizes usability, governance, and value creation. Ultimately, they turn data from a cost center into a growth engine, unlocking compounding value across every function of the enterprise.

Our structured‑data analysis agent connects to CSVs, SQL, and APIs; auto‑detects schemas; and standardizes formats. It finds trends, anomalies, correlations, and revenue opportunities using statistics, heuristics, and LLM reasoning. The output is crisp: prioritized insights and an action‑ready To‑Do list for operators and analysts.
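As a rough illustration of the statistical side of that anomaly detection, here is a minimal sketch using a plain z-score test. The function name `find_anomalies`, the threshold of 2.0, and the sample revenue figures are all hypothetical and are not taken from the agent itself, which may use different methods.

```python
from statistics import mean, stdev

def find_anomalies(values, threshold=2.0):
    """Return (index, value) pairs whose z-score exceeds the threshold.

    Hypothetical helper for illustration only; not the agent's actual logic.
    """
    mu = mean(values)
    sigma = stdev(values)  # sample standard deviation
    if sigma == 0:
        return []  # all values identical, nothing stands out
    return [
        (i, v) for i, v in enumerate(values)
        if abs(v - mu) / sigma > threshold
    ]

# Made-up daily revenue series with one obvious spike.
daily_revenue = [100, 102, 98, 101, 99, 103, 97, 250, 100, 101]
anomalies = find_anomalies(daily_revenue)
print(anomalies)
```

A z-score test is only one simple heuristic; it assumes roughly stable data and can miss anomalies in trending or seasonal series.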

Dive into slides and a hands‑on guide to agentic systems—perception, planning, memory, and action. Learn how agents coordinate tools, adapt via feedback, and make decisions in dynamic environments for automation, assistants, and robotics.

TOON is a compact, LLM-native data format that removes JSON’s structural noise. It lets you fit 5× more structured data into your model’s context window, improving accuracy and reducing cost.
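To make the idea concrete, the sketch below encodes a list of flat records in a TOON-style tabular layout, where field names are declared once in a header instead of being repeated in every object. It is a simplified illustration under stated assumptions: the helper `to_toon_table` is hypothetical, it handles only flat rows with identical keys, and it skips the quoting and nesting rules a full TOON encoder would need.

```python
import json

def to_toon_table(name, rows):
    """Encode same-keyed flat dicts as a TOON-style tabular block.

    Hypothetical, simplified helper: no quoting, escaping, or nesting.
    """
    if not rows:
        raise ValueError("this sketch expects at least one row")
    fields = list(rows[0].keys())
    for row in rows:
        if list(row.keys()) != fields:
            raise ValueError("all rows must share the same keys in the same order")
    # Header declares the array length and the field names once.
    header = name + "[" + str(len(rows)) + "]{" + ",".join(fields) + "}:"
    lines = [header]
    for row in rows:
        lines.append("  " + ",".join(str(row[f]) for f in fields))
    return "\n".join(lines)

# Made-up sample data.
rows = [
    {"id": 1, "name": "Alice", "role": "admin"},
    {"id": 2, "name": "Bob", "role": "user"},
]
toon_text = to_toon_table("users", rows)
json_text = json.dumps({"users": rows})

print(toon_text)
print(len(json_text), "characters as JSON vs", len(toon_text), "as TOON-style text")
```

Character counts are only a proxy for token counts, and the savings depend heavily on how uniform and tabular the data is, so measure with your own tokenizer and payloads.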

GenAI and Agentic AI accelerate data‑product development: generate synthetic data, enrich datasets, summarize and reason over large corpora, and automate reporting. Use them to detect anomalies, surface drivers, and power predictive models—while keeping humans in the loop for control and safety.

KreateHub turns prompts into reusable knowledge assets—experiment, track variants, and compose chains that transform raw data into decisions. It’s your workspace for rapid iteration, governance, and measurable impact.

A pragmatic playbook for CIOs/CTOs: scope the stack, forecast usage, model costs, and sequence investments across infra, safety, and business use cases. Apply the framework to IT first, then scale to enterprise functions.

Explore practical RAG patterns: unstructured corpora, tabular/SQL retrieval, and guardrails for accuracy and compliance. Implementation notes included.

The Drivetrain approach frames product building in four steps; “knobs” are the controllable inputs that move outcomes. Design clear metrics, expose the right levers, and iterate—control leads to compounding impact.