Vector DB CRUD: The Hurdles


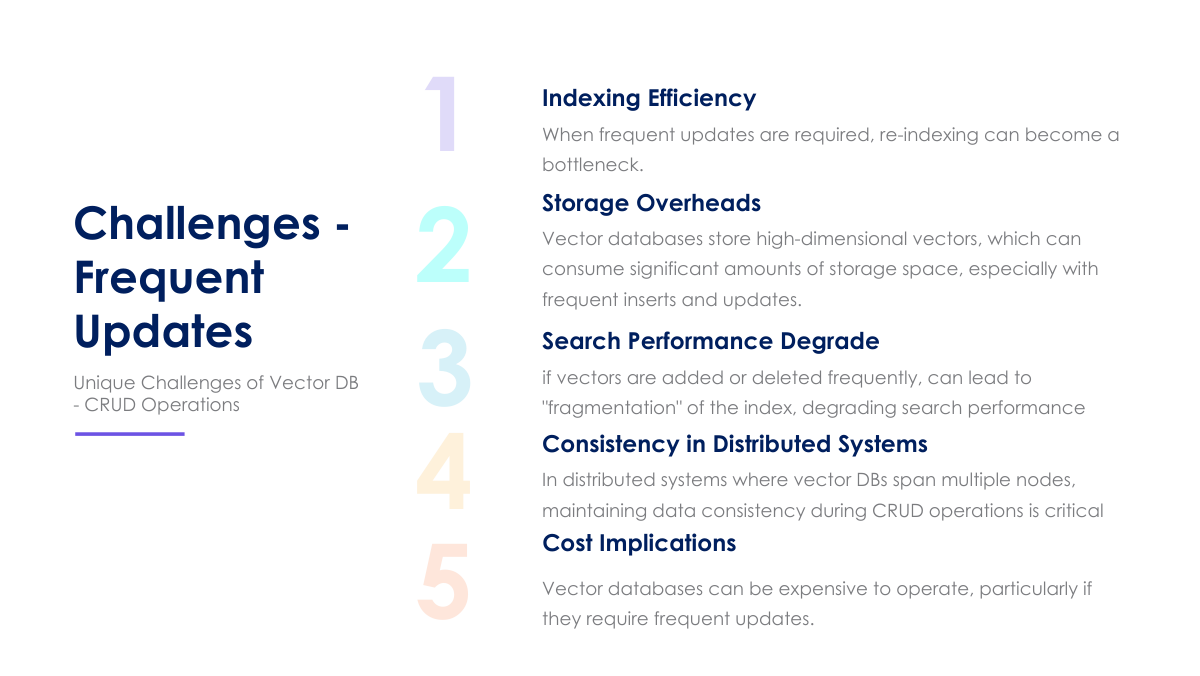

Challenges in Using CRUD Operations on Vector Databases

Vector databases are specialized databases designed to store, manage, and efficiently query high-dimensional vector embeddings. These embeddings represent data points in a vector space, capturing semantic relationships and similarities. Vector databases are crucial for applications like semantic search, recommendation systems, image recognition, and natural language processing. While they offer significant advantages in handling vector data, performing Create, Read, Update, and Delete (CRUD) operations on them presents unique challenges that must be addressed to ensure optimal performance and reliability. This article delves into these challenges, exploring indexing efficiency, storage overheads, search performance degradation, consistency in distributed systems, and cost considerations.
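To make the four operations concrete, here is a minimal sketch of a toy in-memory vector store. It is not how any particular vector database works: the class name `InMemoryVectorStore`, its methods, and the sample embeddings are all hypothetical, and reads use a brute-force cosine-similarity scan instead of an approximate nearest-neighbor index. Because there is no index, create, update, and delete are trivial here; the challenges discussed in this article arise once an index has to be kept in sync with those writes.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class InMemoryVectorStore:
    """Hypothetical toy store: a dict of id -> embedding with no index."""

    def __init__(self, dim: int) -> None:
        self.dim = dim
        self._vectors: Dict[str, Vector] = {}

    def _check_dim(self, vector: Vector) -> None:
        if len(vector) != self.dim:
            raise ValueError(f"expected {self.dim} dimensions, got {len(vector)}")

    def create(self, item_id: str, vector: Vector) -> None:
        """Insert a new embedding; reject duplicate ids."""
        if item_id in self._vectors:
            raise KeyError(f"id already exists: {item_id}")
        self._check_dim(vector)
        self._vectors[item_id] = list(vector)

    def read(self, query: Vector, k: int = 3) -> List[Tuple[str, float]]:
        """Return the k most similar items by scanning every stored vector."""
        self._check_dim(query)
        scored = [
            (item_id, cosine_similarity(query, vector))
            for item_id, vector in self._vectors.items()
        ]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:k]

    def update(self, item_id: str, vector: Vector) -> None:
        """Overwrite the embedding of an existing id."""
        if item_id not in self._vectors:
            raise KeyError(f"unknown id: {item_id}")
        self._check_dim(vector)
        self._vectors[item_id] = list(vector)

    def delete(self, item_id: str) -> None:
        """Remove an embedding; raises KeyError if the id is unknown."""
        del self._vectors[item_id]

    def __len__(self) -> int:
        return len(self._vectors)


# Example usage with made-up 3-dimensional embeddings.
store = InMemoryVectorStore(dim=3)
store.create("cat", [1.0, 0.0, 0.0])
store.create("dog", [0.9, 0.1, 0.0])
store.create("car", [0.0, 0.0, 1.0])
nearest = store.read([1.0, 0.0, 0.0], k=2)  # "cat" ranks first, then "dog"
```

The full scan in `read` costs time proportional to the number of stored vectors, which is why production systems rely on indexes, and why keeping those indexes current under writes becomes the central difficulty.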

Conclusion

CRUD operations on vector databases present a unique set of challenges related to indexing efficiency, storage overheads, search performance degradation, consistency in distributed systems, and cost. Addressing these challenges requires careful consideration of the specific application requirements, data characteristics, and available resources. By employing appropriate mitigation strategies, organizations can effectively manage vector databases and unlock the full potential of vector embeddings for various applications. Continuous monitoring, performance tuning, and adaptation to evolving data patterns are essential for maintaining optimal performance and cost-effectiveness over time.
